TL;DR:

- AI anomaly detection achieves up to 96% accuracy, surpassing conventional security methods.

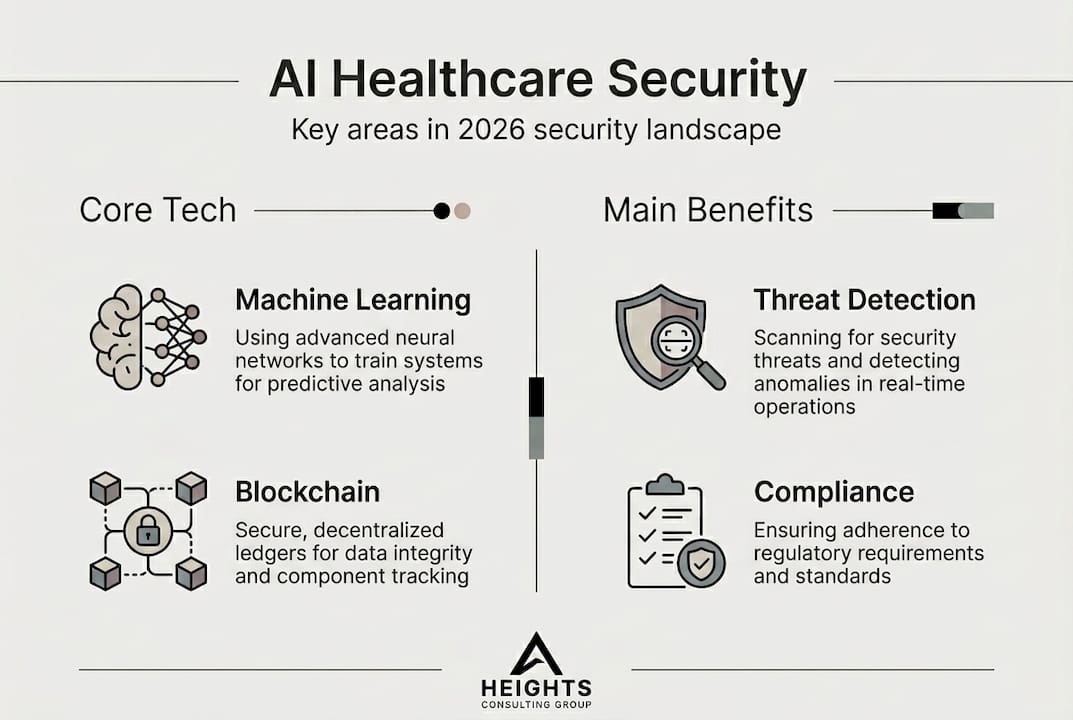

- Multi-layered security combines AI, cryptography, and blockchain to strengthen healthcare defenses.

- Human oversight remains essential to manage AI risks like false positives and bias in healthcare security.

Many healthcare executives still believe that skilled security teams, rigorous protocols, and periodic audits are enough to protect patient data. That assumption is increasingly difficult to defend. AI anomaly detection has been reported to reach up to 96% accuracy in healthcare security environments, well ahead of conventional rule-based methods. For compliance officers managing HIPAA obligations, third-party vendor risk, and an attack surface expanded by connected medical devices, that number is more than impressive. It signals that the rules of the game have fundamentally changed.

Table of Contents

- Understanding the AI landscape in healthcare security

- How AI detects threats and prevents breaches

- Advanced frameworks: AI, cryptography, and blockchain in defense

- The limits, risks, and regulatory impacts of AI in healthcare security

- What most executives miss: The real balancing act

- Connect AI strategies to actionable solutions with Heights CG

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI delivers real-time threat detection | Machine learning models in healthcare security enable continuous monitoring and rapid breach response. |
| Advanced frameworks boost resilience | Combining AI anomaly detection with blockchain and quantum-resistant cryptography increases defenses against evolving cyber threats. |
| Human oversight remains essential | Executive guidance and compliance strategies are critical to counter AI limitations and ensure regulatory adherence. |
| Deployment faces ethical and data hurdles | Implementing AI in healthcare security requires addressing bias, data scarcity, and regulatory complexities. |

Understanding the AI landscape in healthcare security

Artificial intelligence in healthcare cybersecurity is not a single tool. It is an architecture, a way of operationalizing security through automation, real-time analytics, and adaptive response. Where traditional security relies on static rule sets and signature-based detection, AI-enabled systems learn from data patterns and adjust continuously. The distinction matters enormously in a healthcare environment where the threat landscape evolves faster than any manual update cycle can track.
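To make the difference concrete, here is a minimal Python sketch that contrasts a static rule with a per-user learned baseline. Everything in it is a hypothetical value chosen for illustration and is not taken from any product: the 100-records-per-hour limit, the user roles, the access counts, and the 3-standard-deviation cutoff.

```python
from statistics import mean, stdev

# Hypothetical static rule: flag any user who opens more than 100 records in an hour.
STATIC_LIMIT = 100

def static_rule(count: int) -> bool:
    """Signature-style check: the same fixed limit applies to every user."""
    return count > STATIC_LIMIT

def baseline_rule(history: list[int], count: int, k: float = 3.0) -> bool:
    """Learned check: flag counts more than k standard deviations above
    this user's own historical mean."""
    mu = mean(history)
    sigma = stdev(history) or 1.0  # fall back to 1.0 if the history is perfectly flat
    return (count - mu) / sigma > k

# Hypothetical hourly record-access histories.
histories = {
    "radiologist": [280, 310, 295, 305, 290, 300],  # high volume is normal for this role
    "billing_clerk": [4, 6, 5, 3, 5, 6],            # low volume is normal for this role
}
current = {"radiologist": 302, "billing_clerk": 80}

for user, count in current.items():
    print(user, "static:", static_rule(count), "baseline:", baseline_rule(histories[user], count))
```

The static rule fires on the radiologist, which is a false positive, and misses the billing clerk. The baseline reverses both outcomes because it compares each user with their own history rather than with one global limit.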

At the core of this shift are machine learning models purpose-built for anomaly detection and classification. Models including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), Random Forest, Support Vector Machines (SVMs), and Long Short-Term Memory (LSTM) networks are actively deployed across healthcare networks. They identify suspicious behavior, flag unusual data access patterns, and surface potential intrusions before they escalate. Each model has a distinct strength. Random Forest handles high-dimensional data efficiently. SVMs separate normal from malicious activity with a maximum-margin decision boundary. LSTM networks excel at detecting sequential behavioral anomalies over time. GANs and VAEs are particularly effective at modeling normal system behavior, so deviations register quickly.

The contrast with traditional methods is stark. Consider the following:

| Capability | Traditional security | AI-enabled security |
|---|---|---|
| Threat detection speed | Hours to days | Seconds to minutes |
| Detection accuracy | Moderate, rule-dependent | Up to 96% reported (higher on some IoMT benchmarks) |
| Adaptability | Manual updates required | Continuous self-learning |
| IoMT device monitoring | Limited | Real-time, device-aware |
| False positive management | High volume, manual triage | Reduced through model tuning |

Key characteristics that define AI-driven healthcare security include:

- Automated behavioral baselining across users, devices, and network segments

- Real-time event correlation that connects signals across disparate systems

- Adaptive alerting thresholds that reduce alert fatigue for security operations teams

- Integration with Internet of Medical Things (IoMT) devices, which traditional tools often cannot monitor effectively
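Event correlation can be illustrated with a short sketch. It groups alerts by user and flags a user only when several independent systems fire within a time window. The source names, the 10-minute window, and the two-source minimum are hypothetical parameters for illustration, not settings from any specific platform.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Event:
    timestamp: int  # seconds since an arbitrary start
    user: str
    source: str     # e.g. "ehr_audit", "vpn", "iomt_gateway" (hypothetical names)

def correlate(events: list[Event], window: int = 600, min_sources: int = 2) -> set[str]:
    """Return users whose events come from at least `min_sources` distinct
    systems within some span of at most `window` seconds."""
    by_user = defaultdict(list)
    for e in events:
        by_user[e.user].append(e)

    flagged = set()
    for user, evs in by_user.items():
        evs.sort(key=lambda e: e.timestamp)
        start = 0
        for end in range(len(evs)):
            # Advance the left edge until the span covers at most `window` seconds.
            while evs[end].timestamp - evs[start].timestamp > window:
                start += 1
            if len({e.source for e in evs[start:end + 1]}) >= min_sources:
                flagged.add(user)
                break
    return flagged

events = [
    Event(0, "u1", "vpn"),
    Event(120, "u1", "ehr_audit"),      # second system two minutes later: correlated
    Event(0, "u2", "ehr_audit"),
    Event(3600, "u2", "iomt_gateway"),  # an hour apart: not correlated
]
print(correlate(events))  # {'u1'}
```

Requiring agreement across separate systems is one common way to raise alert quality. It trades some sensitivity for fewer single-source false alarms.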

For executives building or evaluating AI in healthcare cybersecurity strategies, understanding these models is not about becoming a data scientist. It is about knowing what questions to ask your security team and which capabilities to prioritize in vendor evaluations. Reviewing AI security best practices can help frame those conversations with precision.

How AI detects threats and prevents breaches

The mechanics of AI-driven threat detection in a hospital network follow a disciplined, layered process. Understanding each stage helps compliance officers and security leaders evaluate whether their current environment is truly protected or simply monitored.

Here is how the detection process typically unfolds:

- Data ingestion: AI systems continuously collect logs, network traffic, device telemetry, and user activity across the environment.

- Baseline modeling: Machine learning algorithms establish what normal looks like for each asset, user role, and system segment.

- Anomaly scoring: When behavior deviates from the baseline, the system assigns a risk score and flags it for further analysis.

- Contextual correlation: The AI cross-references the anomaly against known threat signatures and historical patterns to determine severity.

- Automated or guided response: Depending on configuration, the system either initiates a containment action or escalates to a human analyst with full context.
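The last three stages can be sketched as a small scoring-and-routing function. All of it is a hypothetical illustration and does not describe any vendor's logic: the score adjustments, the 0.8 and 0.5 cut points, the indicator list, and the action names are assumptions. One of those assumed choices is that clinical assets are never contained automatically, so a human decides before care-delivery devices are touched.

```python
from dataclasses import dataclass

# Hypothetical threat-intelligence indicators (documentation-range IP address).
KNOWN_BAD_IPS = {"203.0.113.7"}

@dataclass
class Anomaly:
    asset: str
    anomaly_score: float     # 0..1, output of the baseline model
    remote_ip: str
    asset_is_clinical: bool  # e.g. an infusion pump or EHR server

def severity(a: Anomaly) -> float:
    """Contextual correlation: adjust the model score with known context, capped at 1.0."""
    score = a.anomaly_score
    if a.remote_ip in KNOWN_BAD_IPS:
        score += 0.3  # matches a known indicator
    if a.asset_is_clinical:
        score += 0.1  # potential patient-safety impact raises priority
    return min(score, 1.0)

def respond(a: Anomaly) -> str:
    """Automated or guided response based on severity and asset type."""
    s = severity(a)
    if s >= 0.8 and not a.asset_is_clinical:
        return "auto_contain"         # e.g. isolate a non-clinical endpoint
    if s >= 0.5:
        return "escalate_to_analyst"  # human decides, with full context
    return "log_only"

print(respond(Anomaly("hr-laptop-12", 0.6, "203.0.113.7", False)))    # auto_contain
print(respond(Anomaly("infusion-pump-4", 0.6, "203.0.113.7", True)))  # escalate_to_analyst
print(respond(Anomaly("ehr-db", 0.2, "198.51.100.2", False)))         # log_only
```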

The performance benchmarks behind this process are compelling. AI models have been reported to achieve 96% accuracy, 94% precision, 93% recall, and a 0.94 F1-score in healthcare cybersecurity environments. On IoMT-specific data, accuracy reaches as high as 99.9%. These are results from testing against healthcare network datasets, not theoretical projections.
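For readers less familiar with these metrics, the sketch below shows how each one is computed from a confusion matrix. The counts are hypothetical, chosen only to land near the reported figures, and are not the underlying study data. The sketch also shows that a 0.94 F1-score is consistent with 94% precision and 93% recall. F1 is their harmonic mean, so plugging in the rounded 0.94 and 0.93 gives about 0.935, and unrounded inputs can land at 0.94.

```python
def metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Standard binary-classification metrics from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)  # share of alerts that were real threats
    recall = tp / (tp + fn)     # share of real threats that were caught
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical counts, not the study's data.
m = metrics(tp=930, fp=59, fn=70, tn=2166)
print({k: round(v, 2) for k, v in m.items()})
# {'accuracy': 0.96, 'precision': 0.94, 'recall': 0.93, 'f1': 0.94}
```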

However, a critical caveat exists. Empirical benchmarks of AI ensembles in IoMT show near-perfect detection rates in controlled settings, but real-world deployment frequently lags behind. Data scarcity in niche device categories, inherent bias in training datasets, and the complexity of integrating AI into legacy hospital infrastructure all contribute to a gap between laboratory performance and operational reality.
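One way to surface that gap is to break evaluation results down by device category rather than reporting a single aggregate figure. The sketch below uses hypothetical labels to show how a model can score well overall while missing most threats on a rare device type that was underrepresented in training. The categories and counts are assumptions for illustration.

```python
from collections import defaultdict

def recall_by_group(records: list[tuple[str, bool, bool]]) -> dict[str, float]:
    """records: (device_category, is_attack, was_flagged).
    Returns recall per category, computed over true attacks only."""
    caught = defaultdict(int)
    total = defaultdict(int)
    for category, is_attack, flagged in records:
        if is_attack:
            total[category] += 1
            caught[category] += flagged  # True counts as 1, False as 0
    return {c: caught[c] / total[c] for c in total}

# Hypothetical evaluation: 95 attacks on common workstations, 5 on a rare pump model.
records = (
    [("workstation", True, True)] * 93 + [("workstation", True, False)] * 2
    + [("infusion_pump", True, True)] * 1 + [("infusion_pump", True, False)] * 4
)
overall = sum(f for _, a, f in records if a) / sum(1 for _, a, _ in records if a)
print(round(overall, 2), {c: round(r, 2) for c, r in recall_by_group(records).items()})
# 0.94 {'workstation': 0.98, 'infusion_pump': 0.2}
```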

Statistic to note: AI ensemble models tested on IoMT data achieve accuracy as high as 99.9%, yet fewer than half of healthcare organizations have deployed AI-native threat detection across their full device inventory.

Pro Tip: When deploying AI security tools, prioritize vendors who provide transparency into training data composition and model bias assessments. A model trained on data that does not reflect your patient population or device mix will underperform in ways that are difficult to diagnose without that transparency. Review your organization's AI security governance playbook to set the right internal standards before deployment.

Advanced frameworks: AI, cryptography, and blockchain in defense

Threat detection alone is not sufficient when adversaries are increasingly sophisticated and patient data is among the most valuable targets in any sector. Forward-thinking healthcare organizations are moving toward multi-layered security architectures that combine AI detection with quantum-resistant cryptography and blockchain-based data integrity mechanisms.

The aim of these frameworks is a defense that remains resilient even against AI-powered attacks, which makes them a meaningful architectural leap. Each layer addresses a different threat vector:

- AI anomaly detection identifies behavioral threats in real time across network and device activity

- Quantum-resistant cryptography protects data at rest and in transit against next-generation decryption threats

- Blockchain integrity verification creates immutable audit trails for patient records, access logs, and system changes

- Zero-trust network access (ZTNA) controls lateral movement across clinical and administrative environments

- Federated learning enables AI models to train across distributed hospital systems without exposing raw patient data
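Blockchain-based audit trails get their tamper evidence from hash chaining. Each entry commits to the hash of the entry before it, so altering any earlier record breaks the chain from that point on. The sketch below shows only that hash-chain core, using Python's standard `hashlib` and `json` modules. It is not a blockchain, because it has no distribution or consensus, and the record fields and user names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # fixed starting value for the first link

def entry_hash(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(chain: list[dict], record: dict) -> None:
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})

def verify(chain: list[dict]) -> bool:
    """Recompute every link; any edited record or broken link fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True

log: list[dict] = []
append(log, {"user": "dr_lee", "action": "view", "patient": "P-1001"})
append(log, {"user": "admin2", "action": "export", "patient": "P-1001"})
print(verify(log))                    # True
log[0]["record"]["action"] = "edit"   # tamper with an earlier entry
print(verify(log))                    # False
```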

The integration of these components is not simply additive. It is multiplicative in effect. An attacker who circumvents one layer encounters independent controls at every subsequent stage. This architecture significantly raises the cost and complexity of a successful breach.
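The multiplicative effect can be made precise under one strong assumption: the layers fail independently. If layer *i* misses a given attack with probability p_i, the chance that the attack passes every layer is the product of those probabilities. The miss rates below are hypothetical. Real layers are rarely fully independent, since a single stolen credential may defeat several at once, so treat the result as a best case rather than an estimate.

```python
from math import prod

# Hypothetical per-layer miss probabilities (chance each layer fails to stop an attack).
miss_rates = {
    "ai_detection": 0.05,
    "zero_trust_access": 0.20,
    "encryption": 0.10,
}

# Assuming independent failures, an attack must slip past every layer.
p_breach = prod(miss_rates.values())
print(f"{p_breach:.4f}")  # 0.0010
```

Under these assumptions, the strongest single layer alone lets 5% of attacks through, while the stack lets through 0.1%.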

"The question is not whether to adopt AI in your security stack. It is whether your AI layer is reinforced by controls that can absorb what AI misses."

Pro Tip: When building or auditing your multi-layered framework, evaluate zero-day vulnerability coverage explicitly. AI models excel at detecting known and behavioral anomalies but may miss novel exploit techniques. Supplement AI detection with threat intelligence feeds and red team exercises that specifically target your cryptographic and blockchain components. Exploring enhanced cybersecurity solutions can help identify gaps before adversaries do. Consulting an AI compliance playbook provides a structured path for embedding these frameworks within existing governance structures.

The limits, risks, and regulatory impacts of AI in healthcare security

No technology is without tradeoffs, and AI in healthcare security carries risks that executives cannot afford to treat as secondary concerns. Understanding them clearly is a prerequisite for responsible deployment.

AI outperforms traditional methods in speed, scalability, and adaptability but requires meaningful human oversight to manage false positives and ethical complications. In a hospital setting, a false positive that flags a legitimate clinician's access as suspicious can disrupt care delivery. Too many false positives erode trust in the system among the clinical staff who need to act on alerts.
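The false-positive problem is partly a matter of base rates. When genuine attacks are rare among the events a hospital generates, even a small false-positive rate can mean that most alerts are benign. The sketch below uses hypothetical numbers: 100,000 events, one malicious event per 1,000, a 93% detection rate (the recall figure reported earlier), and a 4% false-positive rate.

```python
def alert_precision(events: int, attack_rate: float, recall: float,
                    fpr: float) -> tuple[float, float, float]:
    """Expected true alerts, false alerts, and precision for a given base rate."""
    attacks = events * attack_rate
    true_alerts = attacks * recall
    false_alerts = (events - attacks) * fpr
    return true_alerts, false_alerts, true_alerts / (true_alerts + false_alerts)

tp, fp, precision = alert_precision(events=100_000, attack_rate=0.001, recall=0.93, fpr=0.04)
print(f"{tp:.0f} real alerts, {fp:.0f} false alerts, precision {precision:.1%}")
# 93 real alerts, 3996 false alerts, precision 2.3%
```

Precision depends heavily on how common attacks are in the data. A benchmark's precision figure therefore says little about alert volume in a production environment with a different attack mix.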

Key risks to assess include:

- False positives and alert fatigue: High-volume, low-precision alerts desensitize security teams and slow incident response

- Training data bias: Models trained on unrepresentative datasets may underperform for specific patient populations or device types

- Attacker adaptation: Adversaries are actively developing AI-powered polymorphic attacks designed to evade detection

- Model opacity: Black-box AI decisions complicate accountability, particularly under HIPAA audit requirements

- Third-party AI vendor risk: Delegating detection to a vendor without visibility into their model updates introduces unmanaged dependencies

From a regulatory standpoint, the stakes are high. HIPAA requires documented controls and clear accountability for how protected health information is accessed and safeguarded. GDPR adds consent and data minimization obligations for organizations serving European patients. Neither framework was written with AI-native security systems in mind, which means compliance officers must actively interpret guidance and document AI decision-making processes.

"Regulatory bodies are watching how healthcare organizations govern their AI tools. The organizations that document their oversight structures today will be far better positioned when formal AI compliance standards arrive."

Pro Tip: Establish a formal human-in-the-loop review protocol for all AI-generated security decisions above a defined risk threshold. This creates an auditable trail that satisfies both HIPAA documentation requirements and emerging AI governance expectations. The AI compliance strategy guide offers a structured framework for operationalizing this approach within regulated healthcare environments.
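A minimal sketch of such a protocol holds each decision at or above a risk threshold until a named reviewer approves or rejects it, and writes every outcome to an audit record. The 0.7 threshold, field names, alert IDs, and reviewer IDs are hypothetical. In practice the record would go to durable, access-controlled storage rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.7  # hypothetical risk score requiring human sign-off

@dataclass
class Decision:
    alert_id: str
    risk: float
    proposed_action: str
    status: str = "pending"
    audit: list[dict] = field(default_factory=list)

    def log(self, actor: str, event: str, note: str = "") -> None:
        self.audit.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor, "event": event, "note": note,
        })

def submit(d: Decision) -> Decision:
    """High-risk AI decisions wait for a human; low-risk ones execute and are logged."""
    if d.risk >= REVIEW_THRESHOLD:
        d.status = "awaiting_review"
        d.log("ai_engine", "held_for_review")
    else:
        d.status = "executed"
        d.log("ai_engine", "auto_executed")
    return d

def review(d: Decision, reviewer: str, approve: bool, rationale: str) -> Decision:
    if d.status != "awaiting_review":
        raise ValueError("decision is not awaiting review")
    if not rationale.strip():
        raise ValueError("a rationale is required for the audit trail")
    d.status = "executed" if approve else "rejected"
    d.log(reviewer, "approved" if approve else "rejected", rationale)
    return d

d = submit(Decision("ALRT-42", risk=0.85, proposed_action="disable_account"))
review(d, reviewer="analyst_kim", approve=False, rationale="Covering shift; access is expected.")
print(d.status, [e["event"] for e in d.audit])  # rejected ['held_for_review', 'rejected']
```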

What most executives miss: The real balancing act

The conversation around AI in healthcare security tends to focus on performance metrics, and understandably so. A 96% detection accuracy rate is genuinely impressive. But the executives who treat AI as a finished solution rather than a powerful component are the ones most exposed.

AI enables advanced defenses, but attackers use AI for polymorphic attacks, which means the technological advantage is not permanent. The moment your AI model becomes predictable, it becomes exploitable. Adversaries are not passive. They study your detection patterns and probe for blind spots.

The real balancing act is not between AI and traditional security. It is between automation speed and human judgment. The organizations building genuine cyber resilience are the ones designing adaptive, human-in-the-loop systems where experienced analysts guide AI, not just monitor it. That requires executive leadership, not just security team execution. Leaders who want to understand where their strategy stands should review the board guide to AI risk to ground those conversations in operational reality.

Connect AI strategies to actionable solutions with Heights CG

Understanding AI's role in healthcare security is a start. Translating that understanding into a resilient, compliant security program requires structured guidance and experienced execution.

Heights Consulting Group works with healthcare organizations and compliance teams to build AI-informed security strategies grounded in frameworks like NIST, HIPAA, and CMMC. From threat detection architecture to healthcare compliance frameworks and managed security services, we provide the strategic and technical depth your organization needs. If you are ready to move beyond awareness and into action, explore how cybersecurity business transformation becomes a competitive advantage. Reach out to our team to begin the conversation.

Frequently asked questions

How does AI outperform traditional healthcare security methods?

AI delivers faster, more accurate threat detection by continuously learning from network behavior rather than relying on static rules. AI has been reported to achieve 96% accuracy, 94% precision, 93% recall, and a 0.94 F1-score in healthcare cybersecurity testing, a level of performance that signature-based tools struggle to match at scale.

What are the main risks of using AI in healthcare security?

Key risks include false positives that disrupt clinical workflows, data bias that reduces model accuracy for certain device types or populations, and AI-powered polymorphic attacks designed to evade detection. Each of these requires ongoing human oversight.

Can AI security tools be integrated with compliance frameworks?

Yes. Multi-layered frameworks that combine AI anomaly detection with quantum-resistant cryptography and blockchain-based audit trails can support HIPAA and GDPR compliance while protecting sensitive patient data. This holds provided the organization documents how AI decisions are made and reviewed.

What is the biggest challenge in deploying AI security for healthcare?

Real-world performance lags behind benchmark results because of data scarcity and bias in training datasets. AI decisions also still need human validation in complex, regulated clinical environments.